The application of neural network architectures to financial time series analysis represents one of the most significant advances in quantitative finance over the past decade. For UK finance professionals seeking to understand and evaluate AI-driven market analysis tools, a technical yet accessible understanding of these architectures is essential. This article explores the fundamental mechanisms behind LSTM networks, attention mechanisms, and transformer models, providing the knowledge needed to critically assess modern AI analytics tools.

Financial time series data presents unique challenges that distinguish it from other sequential data types. Market data exhibits non-stationarity, high noise levels, complex interdependencies, and regime changes that traditional statistical methods struggle to capture. Neural network architectures, particularly those designed for sequential data, offer powerful capabilities for modeling these complex temporal patterns.

Understanding LSTM Networks: The Foundation of Sequential Financial Analysis

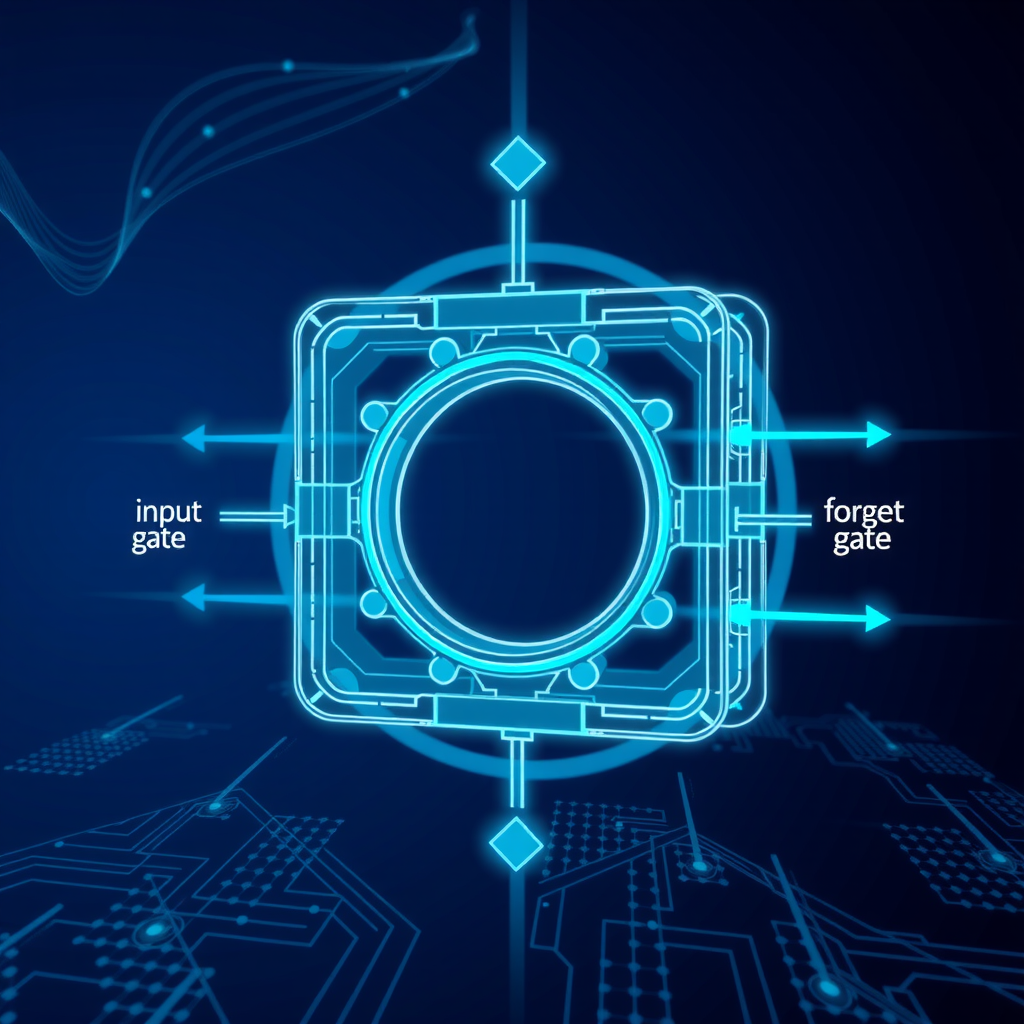

Long Short-Term Memory (LSTM) networks revolutionized sequential data processing when introduced in 1997, and they remain fundamental to financial time series analysis today. Unlike traditional recurrent neural networks that struggle with long-term dependencies, LSTM networks employ a sophisticated gating mechanism that enables them to selectively retain or forget information over extended sequences.

The LSTM architecture consists of three primary gates that control information flow: the forget gate determines what information to discard from the cell state, the input gate decides what new information to store, and the output gate controls what information to output based on the cell state. This gating mechanism allows LSTMs to maintain relevant information over long sequences while filtering out noise, a critical capability when analyzing financial markets where patterns may emerge over varying time horizons.
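The gate logic described above can be sketched in a few lines of NumPy. This is a minimal illustration of a single LSTM time step, not a production implementation: the function name `lstm_step`, the stacked weight layout, the random weights, and the three-feature input are all assumptions made for the example.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def lstm_step(x_t, h_prev, c_prev, W, b):
    """Run one LSTM time step.

    x_t: input features at this step, shape (n_in,)
    h_prev, c_prev: previous hidden and cell state, shape (n_hid,)
    W: stacked gate weights, shape (4 * n_hid, n_in + n_hid) (assumed layout)
    b: stacked gate biases, shape (4 * n_hid,)
    """
    n_hid = h_prev.shape[0]
    z = W @ np.concatenate([x_t, h_prev]) + b
    f = sigmoid(z[0:n_hid])                 # forget gate: what to discard from the cell state
    i = sigmoid(z[n_hid:2 * n_hid])         # input gate: what new information to store
    o = sigmoid(z[2 * n_hid:3 * n_hid])     # output gate: what to expose as output
    g = np.tanh(z[3 * n_hid:4 * n_hid])     # candidate values for the cell state
    c_t = f * c_prev + i * g                # updated cell state (the "memory")
    h_t = o * np.tanh(c_t)                  # hidden state passed to the next step
    return h_t, c_t

# Illustrative run: 10 time steps of 3 made-up features (e.g. return, volume, indicator).
rng = np.random.default_rng(0)
n_in, n_hid = 3, 4
W = rng.normal(scale=0.1, size=(4 * n_hid, n_in + n_hid))
b = np.zeros(4 * n_hid)
h, c = np.zeros(n_hid), np.zeros(n_hid)
for x_t in rng.normal(size=(10, n_in)):
    h, c = lstm_step(x_t, h, c, W, b)
```

In a trained model the weights would be learned from data; here they are random, so the output only demonstrates the data flow through the gates.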

LSTM Applications in Market Analysis

In practical financial applications, LSTM networks excel at several key tasks. For price prediction, they can learn complex patterns in historical price movements, volume data, and technical indicators. The network's ability to maintain long-term memory enables it to capture market cycles, seasonal patterns, and momentum effects that span multiple trading sessions or even months.

Volatility forecasting represents another crucial application where LSTMs demonstrate significant advantages. Financial volatility exhibits clustering and mean-reversion properties that LSTMs can model effectively. By processing sequences of returns, trading volumes, and market microstructure data, LSTM networks can generate volatility forecasts that inform risk management and option pricing strategies.
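As one hedged illustration of processing sequences of returns, the sketch below turns a price series into supervised training windows. Each input is a window of past log returns, and each target is the realized volatility (standard deviation of log returns) over the days that follow. The helper name `make_vol_dataset`, the `lookback` and `horizon` parameters, and the synthetic prices are assumptions for the example.

```python
import numpy as np

def make_vol_dataset(prices, lookback, horizon):
    """Build (X, y) pairs for volatility forecasting.

    X[k] holds `lookback` consecutive log returns.
    y[k] is the standard deviation of the next `horizon` log returns,
    so each target uses only data that comes after its input window.
    """
    log_ret = np.diff(np.log(prices))
    X, y = [], []
    for t in range(lookback, len(log_ret) - horizon + 1):
        X.append(log_ret[t - lookback:t])          # past returns only
        y.append(np.std(log_ret[t:t + horizon]))   # future realized volatility
    return np.array(X), np.array(y)

# Synthetic price path, for illustration only.
rng = np.random.default_rng(1)
prices = 100.0 * np.exp(np.cumsum(rng.normal(0.0, 0.01, size=60)))
X, y = make_vol_dataset(prices, lookback=20, horizon=5)
```

The resulting `X` could be fed to an LSTM one window at a time, with `y` as the regression target.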

Technical Insight: LSTM networks process financial time series by maintaining a cell state vector that acts as a memory mechanism. At each time step, the network receives new market data and updates its internal state through learned transformations. This enables the model to capture both short-term market reactions and long-term structural patterns simultaneously.

Attention Mechanisms: Focusing on What Matters

While LSTM networks provide powerful sequential modeling capabilities, they face limitations when processing very long sequences or when different time steps have varying relevance to the prediction task. Attention mechanisms address these limitations by allowing the model to dynamically focus on the most relevant parts of the input sequence.

The attention mechanism computes a weighted sum of all input time steps, where the weights represent the importance of each time step for the current prediction. In financial contexts, this allows the model to automatically identify and emphasize significant market events, such as earnings announcements, central bank decisions, or sudden volatility spikes, while downweighting less relevant periods.
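The weighted sum described above can be written compactly. The sketch below uses scaled dot-product scoring against a single query vector, which is one common choice and an assumption here; the names `attention_pool`, `hidden_states`, and `query` are illustrative.

```python
import numpy as np

def softmax(x):
    e = np.exp(x - np.max(x))   # subtract the max for numerical stability
    return e / e.sum()

def attention_pool(hidden_states, query):
    """Summarize a sequence as an attention-weighted sum.

    hidden_states: one vector per time step, shape (T, d)
    query: vector representing the current prediction task, shape (d,)
    Returns the context vector (d,) and the per-step weights (T,).
    """
    d = hidden_states.shape[1]
    scores = hidden_states @ query / np.sqrt(d)   # relevance of each time step
    weights = softmax(scores)                     # non-negative, sums to 1
    context = weights @ hidden_states             # weighted sum over time steps
    return context, weights

# Illustrative data: 30 time steps of 8-dimensional representations.
rng = np.random.default_rng(2)
context, weights = attention_pool(rng.normal(size=(30, 8)), rng.normal(size=8))
```

The `weights` vector is what an attention visualization would plot: a large value at a given step means that step contributed heavily to the prediction.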

Self-Attention and Multi-Head Attention

Self-attention mechanisms enable the model to relate different positions within the same sequence, capturing complex interdependencies between time steps. For financial analysis, this means the model can learn relationships between market conditions at different points in time—for example, how current price action relates to patterns observed weeks or months earlier.

Multi-head attention extends this concept by computing multiple attention patterns in parallel, each potentially capturing different types of relationships. One attention head might focus on short-term price momentum, another on volume patterns, and a third on longer-term trend reversals. This parallel processing enables the model to simultaneously consider multiple market dynamics.
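A minimal NumPy sketch of multi-head self-attention follows. The projection matrices are random stand-ins for learned parameters, and the causal mask, which stops each time step from attending to later ones, is an addition not discussed above but commonly used in forecasting settings. All names and dimensions are assumptions for illustration.

```python
import numpy as np

def softmax(x, axis=-1):
    e = np.exp(x - x.max(axis=axis, keepdims=True))
    return e / e.sum(axis=axis, keepdims=True)

def multi_head_self_attention(X, Wq, Wk, Wv, Wo, n_heads, causal=True):
    """X: (T, d_model); Wq, Wk, Wv, Wo: (d_model, d_model).

    d_model is assumed to be divisible by n_heads.
    Returns the output (T, d_model) and attention weights (n_heads, T, T).
    """
    T, d_model = X.shape
    d_head = d_model // n_heads

    def split(M):
        # (T, d_model) -> (n_heads, T, d_head)
        return M.reshape(T, n_heads, d_head).transpose(1, 0, 2)

    Q, K, V = split(X @ Wq), split(X @ Wk), split(X @ Wv)
    scores = Q @ K.transpose(0, 2, 1) / np.sqrt(d_head)    # (n_heads, T, T)
    if causal:
        future = np.triu(np.ones((T, T), dtype=bool), k=1)  # True above the diagonal
        scores = np.where(future, -np.inf, scores)          # block attention to the future
    weights = softmax(scores, axis=-1)                      # each row sums to 1
    heads = weights @ V                                     # (n_heads, T, d_head)
    concat = heads.transpose(1, 0, 2).reshape(T, d_model)   # rejoin the heads
    return concat @ Wo, weights

rng = np.random.default_rng(3)
T, d_model, n_heads = 6, 8, 2
Wq, Wk, Wv, Wo = (rng.normal(scale=0.3, size=(d_model, d_model)) for _ in range(4))
out, attn = multi_head_self_attention(rng.normal(size=(T, d_model)), Wq, Wk, Wv, Wo, n_heads)
```

Each slice `attn[h]` is a separate T-by-T attention pattern, which is what allows different heads to specialize in different relationships.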

UK financial institutions implementing attention-based models have reported significant improvements in prediction accuracy for complex tasks such as multi-asset portfolio optimization and cross-market correlation analysis. The interpretability benefits are equally valuable—attention weights provide insights into which historical periods the model considers most relevant for its predictions, offering a degree of explainability often lacking in black-box AI systems.

Transformer Models: The State of the Art in Sequential Processing

Transformer architectures, introduced in 2017 for natural language processing, have rapidly become the dominant approach for sequential data analysis across domains, including finance. Transformers rely entirely on attention mechanisms, dispensing with recurrence altogether, which enables more efficient parallel processing and better capture of long-range dependencies.

The original transformer architecture consists of an encoder that processes the input sequence and a decoder that generates predictions. Both components employ multi-head self-attention and position-wise feed-forward networks. For financial time series, the encoder processes historical market data, learning rich representations that capture complex temporal patterns, while the decoder generates forecasts or trading signals. Many forecasting models use only one of the two components, so it is worth confirming which variant a given tool actually implements.

Positional Encoding in Financial Contexts

Since transformers process all time steps in parallel rather than sequentially, they require explicit positional information to understand temporal ordering. Positional encoding adds information about each time step's position in the sequence. In financial applications, this can be enhanced with domain-specific temporal features such as day of week, time of day, or position within the trading session, helping the model learn time-dependent patterns like intraday volatility curves or end-of-month effects.
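The sketch below shows one way to build these inputs: the sinusoidal positional encoding from the original transformer design, plus a cyclical encoding for calendar features such as day of week. The function names, the five-day trading week, and the choice to concatenate the two are assumptions for illustration; `d_model` is assumed to be even.

```python
import numpy as np

def sinusoidal_encoding(n_steps, d_model):
    """Fixed positional encoding: sine on even columns, cosine on odd columns."""
    pos = np.arange(n_steps)[:, None]            # (n_steps, 1)
    two_i = np.arange(0, d_model, 2)[None, :]    # even column indices 0, 2, 4, ...
    angles = pos / np.power(10000.0, two_i / d_model)
    pe = np.zeros((n_steps, d_model))
    pe[:, 0::2] = np.sin(angles)
    pe[:, 1::2] = np.cos(angles)
    return pe

def cyclical(values, period):
    """Map a periodic feature onto a circle so the last and first values are adjacent."""
    angle = 2.0 * np.pi * np.asarray(values) / period
    return np.stack([np.sin(angle), np.cos(angle)], axis=-1)

# Ten consecutive trading days, with weekday coded 0 (Monday) to 4 (Friday).
weekdays = np.arange(10) % 5
pe = sinusoidal_encoding(n_steps=10, d_model=8)
calendar = cyclical(weekdays, period=5)
features = np.concatenate([pe, calendar], axis=1)   # shape (10, 10)
```

The cyclical form avoids telling the model that Friday (4) is far from Monday (0) when, within the weekly cycle, they are neighbours.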

Advantages for Financial Analysis

Transformers offer several advantages for financial time series analysis. Their parallel processing capability enables efficient training on large historical datasets, crucial when analyzing decades of market data across multiple assets. The attention mechanism's ability to directly connect any two time steps, regardless of distance, allows the model to capture long-term dependencies without the degradation that can affect recurrent architectures.

Recent implementations in UK financial services have demonstrated transformers' effectiveness for tasks including high-frequency trading signal generation, multi-horizon forecasting, and anomaly detection in market microstructure data. The architecture's flexibility also enables multi-modal learning, where the model can simultaneously process price data, news sentiment, and macroeconomic indicators.

Practical Implementation Considerations for UK Finance Professionals

Understanding these architectures theoretically is essential, but UK finance professionals must also consider practical implementation aspects when evaluating or deploying AI analytics tools based on neural networks.

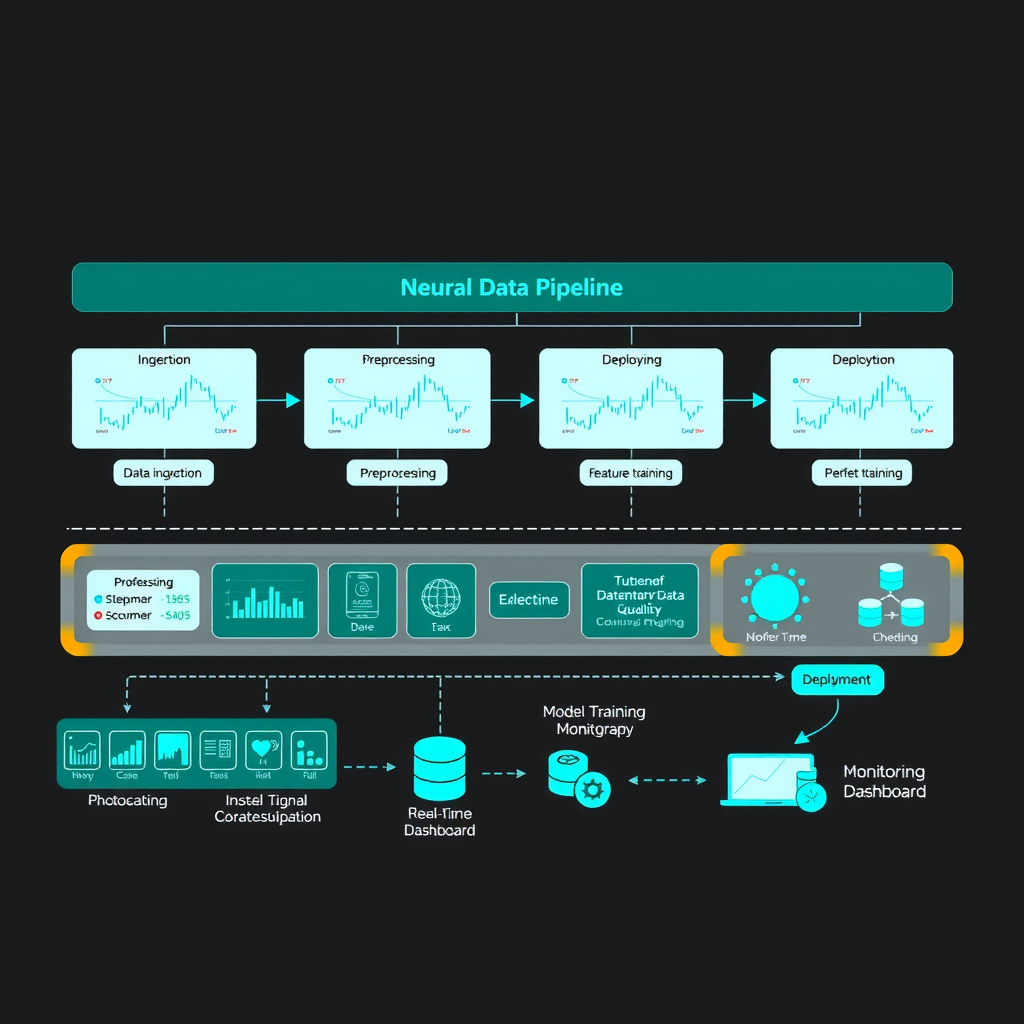

Data Requirements and Preprocessing

Neural network models require substantial amounts of high-quality training data. For financial applications, this means clean, properly aligned time series data with appropriate handling of corporate actions, missing values, and outliers. Data preprocessing typically includes normalization or standardization to ensure numerical stability during training, and careful treatment of look-ahead bias to prevent unrealistic performance estimates.
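A common source of look-ahead bias in preprocessing is computing normalization statistics over the full dataset, including the test period. The sketch below fits the mean and standard deviation on the training window only and reuses them for later data. The function names and the 80/20 chronological split are assumptions for the example.

```python
import numpy as np

def fit_scaler(train):
    """Compute per-feature mean and standard deviation from training data only."""
    mean = train.mean(axis=0)
    std = train.std(axis=0)
    std = np.where(std == 0, 1.0, std)   # avoid division by zero for constant features
    return mean, std

def apply_scaler(x, mean, std):
    """Standardize any data using statistics fitted earlier."""
    return (x - mean) / std

# Synthetic feature matrix: 100 time steps, 3 features, in chronological order.
rng = np.random.default_rng(4)
data = rng.normal(loc=5.0, scale=2.0, size=(100, 3))
train, test = data[:80], data[80:]          # chronological split, no shuffling

mean, std = fit_scaler(train)
train_scaled = apply_scaler(train, mean, std)
test_scaled = apply_scaler(test, mean, std)  # test data never influences the statistics
```

The scaled test set will not have exactly zero mean and unit variance, and that is expected: it reflects what the model would have seen in live use.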

Feature engineering remains important even with sophisticated neural architectures. While these models can learn complex representations, providing relevant input features—such as technical indicators, market microstructure metrics, or macroeconomic variables—can significantly improve performance and reduce training time. UK practitioners should consider regulatory requirements around data usage and ensure compliance with GDPR and financial services regulations.

Model Training and Validation

Training neural networks for financial applications requires careful attention to validation methodology. Standard k-fold cross-validation assigns observations to folds without regard to time, so a model can be trained on data from after its test period, which introduces look-ahead bias. Instead, practitioners should employ walk-forward validation or expanding window approaches that respect temporal ordering. This ensures the model is evaluated only on data that would have been available at the time of prediction.
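The index logic behind walk-forward validation is simple enough to sketch directly. The function below generates train and test index sets in which every test observation comes after every training observation. The function name and parameters are assumptions for illustration; setting `expanding=False` gives a fixed-length rolling window instead of an expanding one.

```python
def walk_forward_splits(n_samples, initial_train, test_size, expanding=True):
    """Return a list of (train_indices, test_indices) pairs in time order.

    expanding=True  -> the training window grows with each split
    expanding=False -> the training window rolls forward at a fixed length
    """
    splits = []
    start = 0
    train_end = initial_train
    while train_end + test_size <= n_samples:
        train_idx = list(range(start, train_end))
        test_idx = list(range(train_end, train_end + test_size))
        splits.append((train_idx, test_idx))
        train_end += test_size          # move the forecast origin forward
        if not expanding:
            start += test_size          # drop the oldest observations
    return splits

for train_idx, test_idx in walk_forward_splits(10, initial_train=4, test_size=2):
    print(f"train {train_idx[0]}..{train_idx[-1]}  test {test_idx[0]}..{test_idx[-1]}")
```

A model would be refitted on each training set and scored on the matching test set, with the scores averaged across splits.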

Hyperparameter tuning—selecting optimal values for learning rates, network depth, attention heads, and other architectural choices—significantly impacts model performance. UK financial institutions typically employ systematic approaches such as grid search or Bayesian optimization, combined with robust validation procedures to identify configurations that generalize well to unseen market conditions.

Regulatory Consideration: UK financial regulators increasingly scrutinize AI-driven trading and risk management systems. Practitioners implementing neural network models should maintain comprehensive documentation of model development, validation procedures, and ongoing monitoring processes to demonstrate appropriate governance and risk management.

Evaluating AI Analytics Tools: Key Questions for Practitioners

Armed with technical understanding of neural network architectures, UK finance professionals can more effectively evaluate commercial AI analytics tools and vendor claims. Several key questions should guide this evaluation process.

Architecture and Methodology

What specific neural network architecture does the tool employ? Understanding whether a system uses LSTMs, attention mechanisms, transformers, or hybrid approaches provides insight into its capabilities and limitations. How does the system handle different market regimes and structural breaks? Financial markets undergo periodic regime changes, and robust systems should demonstrate adaptability to these shifts.

What validation methodology was used to assess performance? Vendors should provide evidence of rigorous out-of-sample testing using appropriate time series validation techniques. Be wary of systems that report only in-sample performance or use validation approaches that could introduce look-ahead bias.

Interpretability and Explainability

Can the system provide explanations for its predictions or recommendations? While neural networks are often characterized as black boxes, modern architectures incorporating attention mechanisms can offer meaningful interpretability through attention weight visualization and feature importance analysis. For UK financial applications, particularly those subject to regulatory oversight, some degree of explainability is increasingly important.

Operational Considerations

What are the computational requirements for model training and inference? Neural network models, particularly large transformers, can be computationally intensive. Understanding infrastructure requirements helps assess total cost of ownership and operational feasibility. How frequently does the model require retraining? Financial markets evolve continuously, and models may need periodic retraining to maintain performance.

What monitoring and alerting capabilities does the system provide? Robust AI analytics tools should include mechanisms to detect model degradation, data quality issues, or unusual predictions that warrant human review. This is particularly important for systems used in automated trading or risk management applications.
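As a rough idea of what a degradation check might look like, the sketch below tracks a rolling mean absolute error and raises a flag when it exceeds a multiple of the error measured during validation. The class name `DriftMonitor`, the window length, and the tolerance factor are hypothetical choices, not features of any particular product.

```python
from collections import deque

class DriftMonitor:
    """Flag possible model degradation from a rolling mean absolute error."""

    def __init__(self, baseline_mae, window=20, tolerance=1.5):
        self.baseline_mae = baseline_mae      # error level observed during validation
        self.tolerance = tolerance            # allowed multiple of the baseline
        self.errors = deque(maxlen=window)    # keeps only the most recent errors

    def update(self, prediction, actual):
        """Record one prediction and return True if an alert should be raised."""
        self.errors.append(abs(prediction - actual))
        if len(self.errors) < self.errors.maxlen:
            return False                      # not enough history yet
        rolling_mae = sum(self.errors) / len(self.errors)
        return rolling_mae > self.tolerance * self.baseline_mae

monitor = DriftMonitor(baseline_mae=1.0, window=3, tolerance=1.5)
alerts = [monitor.update(0.0, actual) for actual in [1.0, 1.0, 1.0, 3.0, 3.0]]
```

In this toy run the alert first fires on the fourth observation, when the rolling error rises above 1.5 times the baseline. A real system would typically route such a flag to human review rather than act on it automatically.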

Future Directions and Emerging Trends

The field of neural networks for financial time series analysis continues to evolve rapidly. Several emerging trends warrant attention from UK finance professionals seeking to stay ahead of developments in AI technology services and AI analytics tools.

Hybrid Architectures

Researchers and practitioners increasingly combine different neural network architectures to leverage their complementary strengths. For example, systems might use convolutional layers to extract local patterns from price charts, LSTM layers to model temporal dependencies, and attention mechanisms to focus on relevant time periods. These hybrid approaches often outperform single-architecture models for complex financial forecasting tasks.

Multi-Modal Learning

Modern financial analysis increasingly incorporates diverse data sources beyond traditional price and volume data. Neural network architectures are being adapted to simultaneously process numerical time series, text data from news and social media, and even audio from earnings calls. Transformers are particularly well-suited to this multi-modal learning paradigm, as their attention mechanisms can learn relationships across different data types.

Federated Learning for Financial Applications

Privacy concerns and competitive considerations often prevent financial institutions from sharing proprietary data. Federated learning enables multiple institutions to collaboratively train neural network models without sharing raw data, potentially improving model performance while maintaining data confidentiality. This approach is gaining traction in UK financial services for applications such as fraud detection and credit risk modeling.
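The aggregation step at the heart of many federated learning schemes can be illustrated with federated averaging, in which a coordinator combines locally trained parameters weighted by each participant's sample count. This is one common scheme, named here as an assumption since no specific method is given above; the function name and the toy parameter values are illustrative.

```python
import numpy as np

def federated_average(client_weights, client_sizes):
    """Combine model parameters from several institutions.

    client_weights: one list of NumPy arrays per client (same shapes across clients)
    client_sizes: number of training samples each client used
    Only parameters are shared; raw data never leaves each client.
    """
    total = float(sum(client_sizes))
    n_layers = len(client_weights[0])
    return [
        sum(w[layer] * (n / total) for w, n in zip(client_weights, client_sizes))
        for layer in range(n_layers)
    ]

# Two hypothetical institutions with a single two-parameter layer each.
bank_a = [np.array([1.0, 1.0])]
bank_b = [np.array([3.0, 3.0])]
global_weights = federated_average([bank_a, bank_b], client_sizes=[1000, 3000])
```

Here the second institution contributed three times as much data, so the averaged parameters sit closer to its values. In practice this step is repeated over many rounds, with the averaged model sent back to each participant for further local training.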

Looking Ahead: As neural network architectures continue to advance, UK finance professionals should maintain awareness of emerging techniques while critically evaluating their practical applicability. Not every architectural innovation translates to improved performance in financial contexts, and the most sophisticated model is not always the most appropriate for a given application.

Conclusion: Informed Evaluation and Strategic Implementation

Neural network architectures—from LSTM networks through attention mechanisms to transformer models—represent powerful tools for financial time series analysis. For UK finance professionals, technical understanding of these architectures enables more informed evaluation of AI analytics tools and more strategic decisions about their implementation.

The key to successful adoption lies not in blindly embracing the latest architectural innovations, but in understanding how different approaches align with specific business requirements, data characteristics, and operational constraints. LSTM networks may suffice for many applications, while more complex transformer architectures might be warranted for tasks requiring analysis of very long sequences or multiple data modalities.

As AI technology services continue to evolve and new architectures emerge, the fundamental principles outlined in this article—understanding how models process sequential data, capture temporal dependencies, and focus attention on relevant information—will remain essential for critical evaluation and effective implementation. UK financial institutions that invest in building this technical literacy across their organizations will be better positioned to leverage AI-driven market analysis tools effectively while managing associated risks appropriately.

The future of financial analysis increasingly involves sophisticated neural network architectures, but success requires more than just deploying advanced technology. It demands a combination of technical understanding, domain expertise, rigorous validation, and thoughtful integration with existing processes and systems. By developing this comprehensive perspective, UK finance professionals can harness the power of neural networks while maintaining the critical judgment necessary for sound financial decision-making.